Breaking News: Meta's MMS Project Breaks Language Barriers with Recognition in over 1,100 Languages


Video transcript

Hi guys, and welcome to The Voice of AI. My name is Chris Plante, and some super cool, big, big news from yesterday: Meta have shared new progress on their AI speech work. The Massively Multilingual Speech (MMS) project has now scaled speech-to-text and text-to-speech to support over 1,100 languages, which is a 10 times increase from previous work.

MMS is a game changer for the world of speech recognition and speech generation technology. This project, led by Meta, aims to break down language barriers by enabling machines to recognize and produce speech in over 1,000 languages, covering 10 times more languages than any existing speech recognition or speech generation model. With this multi-modal system, people from all over the world can access information and communicate effectively in their preferred language, preserving linguistic diversity across the globe.

One of the biggest challenges in creating a multi-modal system for speech recognition and speech generation is finding the vast amounts of labeled data necessary for training such models.

For most languages, such data simply doesn't exist, making it almost impossible to develop good-quality models for speech tasks. However, the MMS project overcomes these challenges by using New Testament translations, which have audio recordings of people reading the text in different languages, to create datasets of readings in over 1,100 languages. This, combined with unlabeled audio recordings from various Christian religious readings, provides labeled data for 1,100 languages and unlabeled data for nearly 4,000 languages.

The results of this project show that MMS models outperform existing models and cover 10 times as many languages. Their models also perform equally well for male and female voices.

Let's have a quick look at the data. Okay, so the first graph shows character error rates. This is an analysis of potential gender bias: automatic speech recognition models trained on MMS data have a similar error rate for male and female speakers, based on the FLEURS benchmark.

And now here, on character error rates: Meta trained multilingual speech recognition models on over 1,100 languages. As the number of languages increases, performance does decrease, but only slightly. Moving from 61 to 1,107 languages increases the character error rate by only about 0.4, but it increases the language coverage by over 18 times.
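For readers unfamiliar with the metrics in these graphs, here is a minimal sketch of how character error rate (CER) and word error rate (WER) are commonly computed: the edit distance between a reference transcript and the recognizer's output, divided by the length of the reference. The function names and sample sentences are illustrative assumptions and are not taken from Meta's evaluation code; real benchmarks usually also normalize casing and punctuation first. The last line only checks the coverage arithmetic quoted in the video.

```python
from typing import Sequence


def edit_distance(ref: Sequence, hyp: Sequence) -> int:
    """Levenshtein distance: minimum insertions, deletions, substitutions."""
    prev = list(range(len(hyp) + 1))  # distance from empty ref to hyp[:j]
    for i, r in enumerate(ref, start=1):
        curr = [i]  # distance from ref[:i] to empty hyp
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(
                prev[j] + 1,         # delete r from the reference
                curr[j - 1] + 1,     # insert h into the reference
                prev[j - 1] + cost,  # match or substitute
            ))
        prev = curr
    return prev[-1]


def cer(ref: str, hyp: str) -> float:
    """Character error rate: character-level edits / reference length."""
    if not ref:
        raise ValueError("reference must not be empty")
    return edit_distance(ref, hyp) / len(ref)


def wer(ref: str, hyp: str) -> float:
    """Word error rate: word-level edits / number of reference words."""
    ref_words = ref.split()
    if not ref_words:
        raise ValueError("reference must not be empty")
    return edit_distance(ref_words, hyp.split()) / len(ref_words)


# Hypothetical example sentences (not from any MMS benchmark).
reference = "the cat sat on the mat"
hypothesis = "the cat sat on a mat"

print(f"WER: {wer(reference, hypothesis):.3f}")
print(f"CER: {cer(reference, hypothesis):.3f}")

# Coverage arithmetic from the video: 61 -> 1,107 languages.
coverage_ratio = 1107 / 61
print(f"Coverage increase: {coverage_ratio:.1f}x")
```

In this toy case one word out of six is wrong, so the WER is about 0.167, while only three character edits are needed across 22 reference characters, so the CER is about 0.136. This is why the two metrics are reported separately and cannot be compared directly with each other.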

If we look here at the word error rate, you can see that in a like-for-like comparison with OpenAI's Whisper, Meta found that models trained on MMS data achieve half the word error rate, but MMS covers 11 times more languages. This demonstrates that their model can perform very well compared to the best current speech models.

And finally, the error rates in percentage: Meta trained a language identification model for over 4,000 languages using their datasets as well as existing datasets such as FLEURS and Common Voice, and evaluated it on the FLEURS language identification task. It turns out that supporting 40 times the number of languages still results in very good performance.

Furthermore, the MMS project provides code and models publicly so that other researchers can build upon their work and make contributions to preserve the language diversity in the world. While the models aren't perfect and run the risk of mistranslating select words or phrases, the project recognizes the importance of collaboration within the AI community to ensure that AI technologies are developed responsibly.

This project has the power to make a significant impact on the future of communication, breaking down the language barriers that have historically existed and encouraging people to preserve their languages. The vision is to create a world where technology can understand and communicate in any language, effectively preserving linguistic diversity. While this is really the beginning, the Massively Multilingual Speech (MMS) project represents a significant step towards achieving this goal. With the advancement of technology, the future is looking bright for communication across languages and cultures.

Thanks for watching the video today. Please don't forget to like and subscribe, and join me on the journey to unlock the potential of AI.

If you have any questions or any feedback, please leave them in the comments section below; I'll be happy to read them. So I'll see you next time, and all the very best. I'm Chris from The Voice of AI. Cheers, and bye-bye now.


Dig Deeper

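New AI speech work can support over 1,100 languages

Yesterday, Meta shared new progress on their AI speech work. Their Massively Multilingual Speech (MMS) project has scaled speech-to-text and text-to-speech to support over 1,100 languages, a tenfold increase over previous work. MMS is a game changer for the world of speech recognition and speech generation technology. This project, led by Meta, aims to break down language barriers by enabling machines to recognize and produce speech in over 1,000 languages, covering 10 times more languages than any existing speech recognition or speech generation model. With this multi-modal system, people from all over the world can access information and communicate effectively in their preferred language, preserving linguistic diversity across the globe.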

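The impact of the MMS project

The MMS project offers a multi-modal system for speech recognition and speech generation that works across many language environments. The impact of this technology is considerable, particularly for customer support. With the help of MMS, customer support teams can now communicate better with customers around the world who speak different languages. Whatever language a customer uses, MMS can provide accurate speech recognition and generation, improving the customer experience.

In addition, the MMS project provides code and models publicly so that other researchers can build on them and contribute to preserving the world's language diversity. While the models aren't perfect and may make mistakes when translating certain words or phrases, the project recognizes the importance of collaboration within the AI community to ensure that AI technologies are developed responsibly.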

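An important step towards the future of communication

The MMS project represents an important step towards communication across languages and cultures. With the advancement of technology, the future of communication is looking bright. It can break down the language barriers that have historically existed, encourage people to preserve their languages, and create a world where technology can understand and communicate in any language effectively. While this is only the beginning, the MMS project is a significant step towards achieving this goal.

Thanks for watching today's video. Please don't forget to like and subscribe, and join me on the journey to unlock the potential of AI. If you have any questions or feedback, please leave them in the comments section below; I'll be happy to read them. See you next time, and all the very best!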

Video description

Well, here is some super exciting news from yesterday! Meta's Massively Multilingual Speech project has made impressive progress in enabling machines to recognize and produce speech in over 1,100 languages, covering ten times more languages than any existing speech recognition or speech generation model. It's heartening to see that Meta is working towards breaking down language barriers and enabling people from all over the world to access information and communicate effectively in their preferred language.

The MMS project has addressed a significant challenge in creating a multi-modal system for speech recognition and generation by using Bible translations to create a dataset of readings in over 1,100 languages, combined with unlabeled audio recordings from various religious readings, providing labeled data for 1,100 languages and unlabeled data for nearly 4,000 languages.

What's exciting is that Meta has provided code and models publicly so that other researchers can build upon their work and make contributions to the preservation of language diversity. This is an important step towards creating a world where technology can understand and communicate in any language, effectively preserving linguistic diversity. Overall, the Massively Multilingual Speech (MMS) project represents a significant step towards achieving this goal.

The Massively Multilingual Speech (MMS) project is an incredible advancement in the field of artificial intelligence and language technology. The project's ability to recognize and produce speech in over 1,100 languages is revolutionary and has the potential to make a significant impact on how people communicate across the world.

One of the most significant challenges in creating a multi-modal system for speech recognition and speech generation is finding the vast amounts of labeled data necessary for training such models. The MMS project overcomes this challenge by using New Testament translations, providing labeled data for 1,100 languages and unlabeled data for nearly 4,000 languages. The results of this project show that the MMS models outperform existing models and cover ten times as many languages.

This project has the power to make a significant impact on the future of communication, breaking down the language barriers that have historically existed and encouraging people to preserve their languages. The ability to communicate effectively in any language will positively affect global commerce and cultural exchange.

Moreover, the MMS project provides code and models publicly so that other researchers can build upon their work and make contributions to the preservation of language diversity in the world. The project recognizes the importance of collaboration within the AI community to ensure that AI technologies are developed responsibly, which is crucial for promoting trust and avoiding unintended consequences.

The MMS project represents a significant step towards achieving the goal of creating a world where technology can understand and communicate in any language, effectively preserving linguistic diversity. It holds the potential to change the way the world thinks about cross-linguistic communication. With the advancements of technology, the future is looking bright for communication across languages and cultures.

In conclusion, the MMS project signifies a fantastic achievement in the field of artificial intelligence and language technology. It will undoubtedly impact the future by breaking down language barriers and promoting linguistic diversity. It is exciting to think about the potential positive implications of this project and other advancements in AI technology in facilitating an inclusive global conversation.

Source: https://ai.facebook.com/blog/multilingual-model-speech-recognition/?utm_source=twitter&utm_medium=organic_social&utm_campaign=blog&utm_content=card

Source: GitHub: https://github.com/facebookresearch/fairseq/tree/main/examples/mms

Source: Meta video: https://twitter.com/i/status/1660722199395704852

Source: FLEURS: Few-shot Learning Evaluation of Universal Representations of Speech: https://arxiv.org/abs/2205.12446

Source: Robust Speech Recognition via Large-Scale Weak Supervision: https://arxiv.org/abs/2212.04356

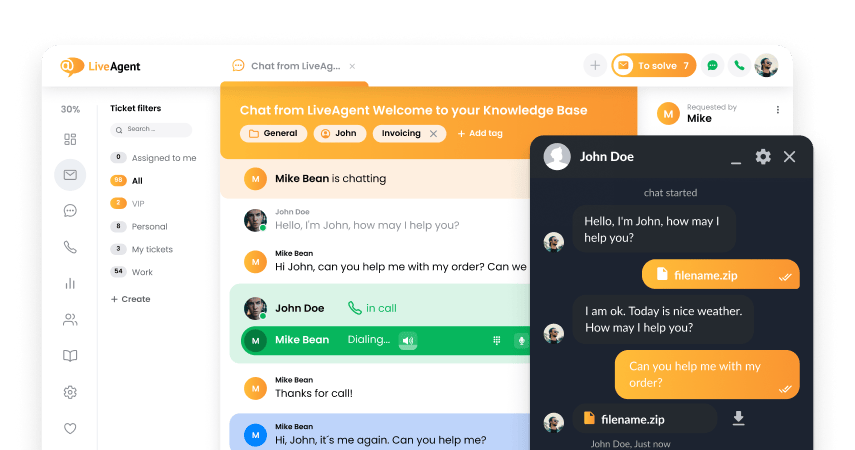

You will be

in Good Hands!

Join our community of happy clients and provide excellent customer support with LiveAgent.

我们的网站使用cookies。点击继续我们将默认为您允许我们将cookies部署在我们的网站上。 隐私和cookie政策.

- How to achieve your business goals with LiveAgent

- Tour of the LiveAgent so you can get an idea of how it works

- Answers to any questions you may have about LiveAgent

Български

Български  Čeština

Čeština  Dansk

Dansk  Deutsch

Deutsch  Eesti

Eesti  Español

Español  Français

Français  Ελληνικα

Ελληνικα  Hrvatski

Hrvatski  Italiano

Italiano  Latviešu

Latviešu  Lietuviškai

Lietuviškai  Magyar

Magyar  Nederlands

Nederlands  Norsk bokmål

Norsk bokmål  Polski

Polski  Română

Română  Русский

Русский  Slovenčina

Slovenčina  Slovenščina

Slovenščina  Tagalog

Tagalog  Tiếng Việt

Tiếng Việt  العربية

العربية  English

English  Português

Português